We thank Radhika Arora from ON Semiconductor for taking part in the Autonomous Vehicles Interview Series and sharing several insights, including:

Her detailed characterization of the state of Autonomous technology

Her insights on the trends in the Autonomous Vehicle industry

Learn more about autonomous vehicles here: Autonomous Vehicles and the Consumer

How would you characterize the state of Autonomous technology?

These last few years have seen an abundance of new technology introduced in the automotive space, making it an extremely exciting time in this industry. This is a market that, for the last 100 years, has been conservative and strictly bound by set standards. Then came Waymo and Tesla, which completely changed the rules of the game.

Electrification, Autonomous driving, Rideshare and Connectivity set a new frontier in the automotive arena.

Radhika Arora

With all these, we have been promised a future in which cars talk to each other to modulate traffic flow, supporting other aspects of visionary urban planning that minimize traffic congestion. Cars parked on the side of the road would become a thing of the past, replaced by more green space right in the center of the city. Taking stock of where we are today in the autonomous space, a lot has been achieved, but the road remains long and arduous. We are far from having fully driverless cars widely deployed across the country, despite the promising strides made by the likes of Waymo. There are still some tremendously knotty problems preventing mass adoption of this technology.

Problems lie on several fronts: technology, regulation, infrastructure, and consumer acceptance. Covering 95% of use cases is achievable and has been proven by multiple automotive manufacturers. However, solving for the remaining 5%, the edge and corner cases, continues to be the challenge every engineer working in this field wants to conquer. There is no consensus on a sensor architecture proven to flawlessly overcome all road challenges, including bad weather, low light, or broken traffic signs. Initially, there was a massive influx of investment dollars and talent into developing autonomous technology, with very aggressive timelines. Those predictions have undergone a reset. In general, roads themselves are orderly, with a predictable environment. However, the humans driving on these roads are anything but predictable. For autonomous vehicles to meet the expectation of being safer than humans, they will have to respond to countless quirks of human drivers. Getting computers to work past these unexpected conditions is where the ordeal remains. Until we get there, autonomous vehicles will be restricted to geo-fenced areas or limited geographical coverage in cities that have been fully mapped and test-driven for myriad use cases.

Pittsburgh-based Argo and the Bay Area’s Waymo, both frontrunners in the race to perfect self-driving tech, are solving for this challenge by training their autonomous-drive systems to rely as much on precisely scanned base maps of the road as on sensors used to “paint” the environment around them. This requires constant map updates, which limits the ability to go off-grid or to scale.

Even though the mass deployment of autonomous vehicles has not been as fast as we predicted, it’s still important to highlight the strides made in technology to make self-driving cars a reality. Computer processing and artificial intelligence systems increasingly think like humans. Nvidia’s latest offering, the DRIVE AGX Orin platform, offers more processing power and better efficiency than its current product for autonomous vehicles and robots. ON Semiconductor’s latest 8 MP offering, the AR0820, achieves 140 dB of dynamic range, giving it the ability to sense objects in scenes that contain both extreme light and shadow. This sensor also has advanced features such as cybersecurity, which add a layer of protection against cyber-attacks.
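To put the 140 dB figure in perspective, here is a minimal sketch that converts a dynamic range in decibels into a brightest-to-darkest signal ratio. It assumes the usual image-sensor convention of 20·log10(ratio); the function names are illustrative and not part of any vendor tool.

```python
import math


def db_to_contrast_ratio(dynamic_range_db: float) -> float:
    """Convert dynamic range in dB to a linear ratio (assumes 20*log10 convention)."""
    return 10 ** (dynamic_range_db / 20)


def contrast_ratio_to_stops(ratio: float) -> float:
    """Express a linear ratio in photographic stops (doublings of signal)."""
    return math.log2(ratio)


if __name__ == "__main__":
    ratio = db_to_contrast_ratio(140)
    stops = contrast_ratio_to_stops(ratio)
    print(f"140 dB is roughly a {ratio:,.0f}:1 ratio, or about {stops:.1f} stops")
```

Under that convention, 140 dB corresponds to a ratio on the order of ten million to one between the brightest and darkest signals the sensor can resolve in a single scene.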

Then there are the forthcoming 5G cellular networks, with exponentially faster speeds than 4G. Coupled with this is the power of the cloud, which will allow massive amounts of data to be offloaded for processing off-site on rigorously updated servers. This allows for regular training and constant updates, making for safer driving. Though we expect cars to drive by and large without connectivity, a more robust, faster, higher-bandwidth data system will enrich self-driving vehicles with enhanced network capabilities.

How vital is the role of sensors in Autonomous technology?

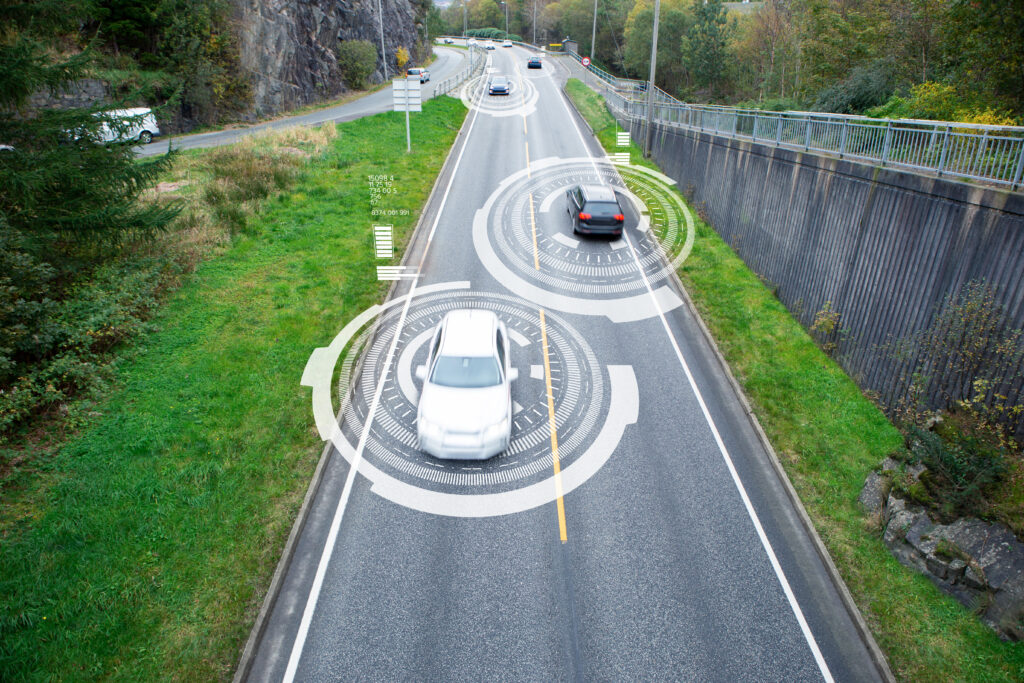

The autonomous car must be able to perform just like a human driver, except that it needs to do so better and more safely. It must be able to see and interpret what's in front when going forward (and behind when in reverse). It also needs to see what is on either side; in other words, it needs a 360° view. Additionally, the car should be able to interpret traffic signs, lane markings, construction zone signs, etc. An autonomous vehicle operates in a partially unknown and dynamic environment; it simultaneously needs to build a map of this environment and localize itself within the map. The input to this Simultaneous Localization and Mapping (SLAM) process needs to come from sensors and from pre-existing maps created by AI systems and humans.

Passive sensors based on camera technology were among the first sensors to be used on autonomous vehicles. These cameras rely on CMOS (complementary metal-oxide semiconductor) image sensors, which work by converting light received in the 400-1100 nm wavelength range (the visible to near-infrared spectrum) into an electric signal.

In most cases, the passive sensor suite aboard the vehicle consists of more than one sensor pointing in the same direction. Cameras have the added advantage of providing color fidelity across the entire field of view, which is useful when deciphering traffic lights and road signs. There is a trend toward both higher-resolution sensors, which provide more pixels per degree, and a higher camera count. Together these provide a 360-degree view around the car, which is critical for Level 3+ autonomy. Cameras today are a mature technology and a cost-effective way to add intelligent sensing to a car. However, relying on cameras alone presents problems: camera performance drops in extreme weather conditions like heavy snow or blizzards, where the cameras might have difficulty perceiving the environment. Extremely low lighting, shadows, and other factors also make it challenging to accurately decide what the camera is seeing.

This brings me to the second type of sensor seen in vehicles with high levels of autonomy: radar. Radar, which is short for radio detection and ranging, is similar to lidar in terms of operation but uses radio waves instead of light. Radio waves have a much longer wavelength than the light used by lidar. The downside of the longer wavelength is that if an object is much smaller than the wavelength, it might not reflect enough energy to be detected.

Radar has an easier time in harsh weather conditions like blizzards or heavy rain, whereas lidar and cameras tend to suffer performance degradation. Overall, the main benefits of radar are its maturity, low cost, and resilience against low light and bad weather. However, radar can only detect objects with low spatial resolution and without much information about the shape of the object; thus, distinguishing between multiple objects or separating objects by direction of arrival can be hard. This has put radar in more of a supporting role in automotive sensor suites. Imaging radar is particularly interesting for autonomous cars. Unlike short-range radar, which relies on 24 GHz radio waves, imaging radar uses higher-frequency 77-79 GHz waves. This allows the radar to scan a 100-degree field of view at distances of up to 300 m. This technology eliminates former resolution limitations and generates a true 4D radar image of ultra-high resolution.
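The wavelength comparison can be made concrete with the relation wavelength = speed of light / frequency. The sketch below uses the 24 GHz and 77-79 GHz bands mentioned above; the 905 nm lidar wavelength is an assumption chosen for illustration (a common value for automotive lidar, not one given in this interview).

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

# Assumption for illustration only: the interview does not state a lidar wavelength.
ASSUMED_LIDAR_WAVELENGTH_M = 905e-9

# Radar bands quoted in the text, in GHz.
RADAR_BANDS_GHZ = {
    "short-range radar": 24.0,
    "imaging radar (lower edge)": 77.0,
    "imaging radar (upper edge)": 79.0,
}


def wavelength_m(frequency_hz: float) -> float:
    """Free-space wavelength in meters for a given frequency in hertz."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


if __name__ == "__main__":
    for name, ghz in RADAR_BANDS_GHZ.items():
        lam = wavelength_m(ghz * 1e9)
        factor = lam / ASSUMED_LIDAR_WAVELENGTH_M
        print(f"{name}: {lam * 1e3:.2f} mm, ~{factor:,.0f}x the assumed lidar wavelength")
```

Even at 77-79 GHz the radar wavelength is a few millimeters, thousands of times longer than near-infrared laser light, which is consistent with radar's coarser spatial detail compared with lidar.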

Another type of sensor used in autonomous vehicles is LIDAR (Light Detection and Ranging). A LIDAR system works by sending thousands of laser pulses every second and creating a 3D point from the reflected light of each pulse. These points are processed to create a 3D representation of the environment and the objects in it. LIDAR sensors are highly advantageous for depth perception and 3D mapping.
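As a rough sketch of how a single reflected pulse could become a 3D point, the example below assumes a pulsed time-of-flight design, which the interview does not specify: range is half the round-trip time multiplied by the speed of light, and the beam's pointing angles place the point in space. The function names and the axis convention (x forward, y left, z up) are hypothetical.

```python
import math

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def round_trip_to_range_m(round_trip_s: float) -> float:
    """Range to the target: the pulse travels out and back, so divide by two."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def to_cartesian(range_m: float, azimuth_deg: float, elevation_deg: float):
    """Convert range and beam angles to (x, y, z); x forward, y left, z up (assumed)."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    x = range_m * math.cos(el) * math.cos(az)
    y = range_m * math.cos(el) * math.sin(az)
    z = range_m * math.sin(el)
    return x, y, z


if __name__ == "__main__":
    # A pulse that returns after 1 microsecond, fired 30 degrees left and 2 degrees up.
    distance = round_trip_to_range_m(1e-6)
    point = to_cartesian(distance, azimuth_deg=30.0, elevation_deg=2.0)
    print(f"range: {distance:.1f} m, point: {tuple(round(c, 1) for c in point)}")
```

Repeating this for every pulse in a scan yields the point cloud from which the 3D representation is built.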

While all the sensors presented have their own strengths and shortcomings, no single one would be a viable solution for all conditions on the road. A vehicle needs to be able to avoid close objects while also sensing objects far away from it. It needs to be able to operate in different environmental and road conditions with challenging light and weather circumstances. This means that to reliably and safely operate an autonomous vehicle, a mixture of sensors is usually used. An array of factors determines the best sensor for the job. These include the scanning range, which determines how much time you have to react to a sensed object; resolution, which determines how much detail the sensor can give you; field of view or angular resolution, which determines how many sensors you need to cover the area you want to perceive; and cost, size, and software compatibility.
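Two of these factors lend themselves to a quick back-of-the-envelope calculation. The sketch below estimates how long a vehicle has before reaching an object first seen at the edge of a sensor's range, and how many sensors of a given field of view are needed for a full 360-degree view. It is a simplification: the closing speed is an assumed value, the object is treated as stationary, and overlap between adjacent sensors is ignored. The function names are illustrative.

```python
import math


def time_to_reach_s(detection_range_m: float, closing_speed_m_per_s: float) -> float:
    """Seconds until the vehicle reaches a stationary object first seen at max range."""
    if closing_speed_m_per_s <= 0:
        raise ValueError("closing speed must be positive")
    return detection_range_m / closing_speed_m_per_s


def sensors_for_full_coverage(field_of_view_deg: float) -> int:
    """Minimum sensor count for 360-degree coverage, ignoring overlap between sensors."""
    if not 0 < field_of_view_deg <= 360:
        raise ValueError("field of view must be in (0, 360] degrees")
    return math.ceil(360 / field_of_view_deg)


if __name__ == "__main__":
    # 300 m range and 100-degree field of view are the imaging radar figures quoted
    # earlier; 30 m/s (about 108 km/h) is an assumed highway speed.
    print(time_to_reach_s(300, 30), "seconds to react")
    print(sensors_for_full_coverage(100), "sensors for a 360-degree view")
```

In practice designers add overlap between neighboring sensors and subtract processing and braking time from the available window, so real requirements are stricter than these lower bounds.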

What trends do you see emerging in the autonomous vehicles industry?

Several autonomous vehicle developers stalled development work at the start of the pandemic in 2020. Some companies used the time to deploy self-driving vehicles such as contactless delivery vans, also referred to as bots. These companies operated them in makeshift hospitals to deliver medicines without contact. We saw an upsurge of driverless bots delivering goods both on the frontlines and to residents bound by stay-at-home orders. At the peak of China’s COVID-19 outbreak, half of the country’s population, approximately 760 million people, were living in some form of home lockdown. At the same time, delivery of groceries was as little as 20 minutes away, thanks to the delivery bots. The autonomous vans played a pivotal role in parts of China, delivering pharmaceutical supplies to areas hit hardest by the virus outbreak. They have even filled labor shortages for businesses that have been restricted or forced to cease operations. The Chinese government plans to subsidize as much as 60% of the purchase price to put these useful machines on the ground. In the US, companies like Nuro are seeing a similar boost in engagement in contactless delivery of groceries.

In 2020, we saw a surge in the level of activity and funding in truck automation. There is a lot of media frenzy around solo driverless trucking, but there has also been some very promising activity in platooning. We will continue to see this market segment grow, with both established OEMs and start-up chip makers working to make this a reality. Daimler, Volvo, Waymo, Scania, and even Tesla have deployed on-road prototypes. Commercial Level 1 platooning is approved in 27 US states and counting. Approved states now encompass over 80% of annual US truck freight traffic. Automated following in a platoon formation will be an accelerated path to a Level 4 commercial launch, since the driver in the lead truck can still handle complex operating scenarios and interact with law enforcement as needed.

We should also brace ourselves for further mergers and acquisitions. Collaboration will be the name of the game. Given the challenges and substantial costs associated with developing, building, and training driverless vehicles, incumbents are collaborating to pool resources while offsetting some of the financial burden. In recent months, Amazon acquired robotaxi manufacturer Zoox, Aurora bought the autonomous arm of Uber Technologies, Mercedes-Benz and Nvidia announced a partnership to develop an upgradable computing platform to support automated driving, and Volvo announced Waymo will be its exclusive partner for Level 4 vehicles.

What are the new business models in the evolving future of autonomy?

The development of autonomous vehicles in conjunction with the service-based economy can be disruptive, paving the way for a new age of mobility, where ownership of assets is valued less, relative to convenience and user experience, which will drive the business model evolution.

Radhika Arora

Fleet operations will see the first wave of this disruption. A form of it has already been proven by the success of the Uber and Lyft business model, which brought in a new market of ride-sharing apps. Autonomous driving fleets will feed into a service-based model. For consumers who need regular access to a vehicle, such as work commuters, a licensing model would conceivably work well. Fleets create business models around the frequency of usage and miles consumed. It has been proven in multiple cities around the world that driving-hour limitations create better asset utilization, less downtime, and healthier operating costs. Extend that to “autonomous-only zones” and you have a city with more open spaces, parking lots replaced with parks, less congestion, and efficient movement of people and goods. We are driving towards a future that will change the “one car for all purposes” model.

Autonomous vehicles will generate an unprecedented level of data. Car data can fall into the categories of safety, entertainment, convenience, and cost reduction. Each of these categories will have its own monetization models, driven by collaboration across providers. Autonomous vehicles will transform the car into a platform from which passengers can use their transit time for personal activities. This will usher in new forms of media and services. In-car advertising can make the vehicle a point-of-sale kiosk. In the safety category, car data can enable rescue services if required. Timely road hazard warnings allow drivers to course correct. Automated monitoring systems and predictive maintenance can reduce breakdowns, leading to more convenience and ease of movement. Opportunities will also exist in network-based data analytics and monitoring. C-V2X (cellular vehicle-to-everything) services include traffic information, real-time mapping, telematics, and data analytics.

Regulators are important stakeholders in the evolving autonomous driving ecosystem. As an example, if taxes are levied based on hours parked/space occupied, business models will shift to minimize idle time. We are at a crossroads between the technology industry guiding regulation and letting regulation direct the future of this industry.

In the era of autonomous vehicles, we will see a complete shift from the traditional models of operation. We will see a mix of start-ups, traditional OEMs, and entertainment and insurance companies all coming together to usher in a new ecosystem driven by safety and convenience.

Radhika Arora